AI Chatbots and Adolescent Mental Health: Reflections on Sophie Klose’s Master’s Thesis (Webster University)

Marcia Banks, Coordinator of English Publications, Le Pôle

Reflections on Sophie Klose’s Master’s thesis (Webster University, May 2025): An Analysis of Mental Health AI Chatbots in Simulated Counselling Contexts for Adolescents and Young Adults

It is from the perspective of an educator, reflecting on both research and lived experience, that Sophie Klose’s 2025 thesis resonates. Her work examines how effective AI chatbots are in addressing depression, suicidal ideation, and self-harm, and raises important questions for schools and educators who are increasingly encountering this reality.

Rates of anxiety, depression, self-harm, and suicidal ideation continue to rise during adolescence. According to Sophie Klose’s thesis, many young people are turning to AI chatbots for advice and emotional support, often before reaching out to a trusted adult. In many ways, this is not surprising.

A review by the World Health Organization (2024) indicates that one in seven children aged 10–19 lives with a mental disorder, and that suicide is the second leading cause of death among 10–19-year-olds in Europe. Self-harming behaviours are often established as early as ages 12–13. Early identification and intervention are therefore critically important, as adolescents who experience a first episode of major depressive disorder are more likely than others to experience mental health difficulties later in life.

Although Sophie Klose does not focus explicitly on schools or their role in early identification, it is clear that schools sit at the centre of this reality, because children and adolescents spend so much of their lives there. Mental health concerns often emerge not as crises, but as gradual changes: withdrawal, fatigue, irritability, or shifts in engagement and academic performance. Educators and school-based professionals are not therapists, yet they are frequently the first to notice when something is not quite right.

Educators are aware of the importance of fostering a sense of belonging and connection within the school environment, protective factors that matter profoundly for adolescent wellbeing.

Why Adolescents Turn to AI Chatbots

AI is part of children’s lives today. Students use AI for schoolwork and, as Sophie Klose’s research suggests, increasingly for advice and emotional support. AI is immediately accessible, responds quickly, and offers a sense of privacy. There is no fear of disappointing someone, no need to manage another person’s emotional reaction, and no uncertainty about whether one’s concerns are “serious enough.”

Stigma, fear of judgment, long waiting lists to see a therapist or counsellor, and uncertainty about how to begin talking about their feelings can all discourage adolescents from seeking professional help. In this context, AI becomes an appealing alternative.

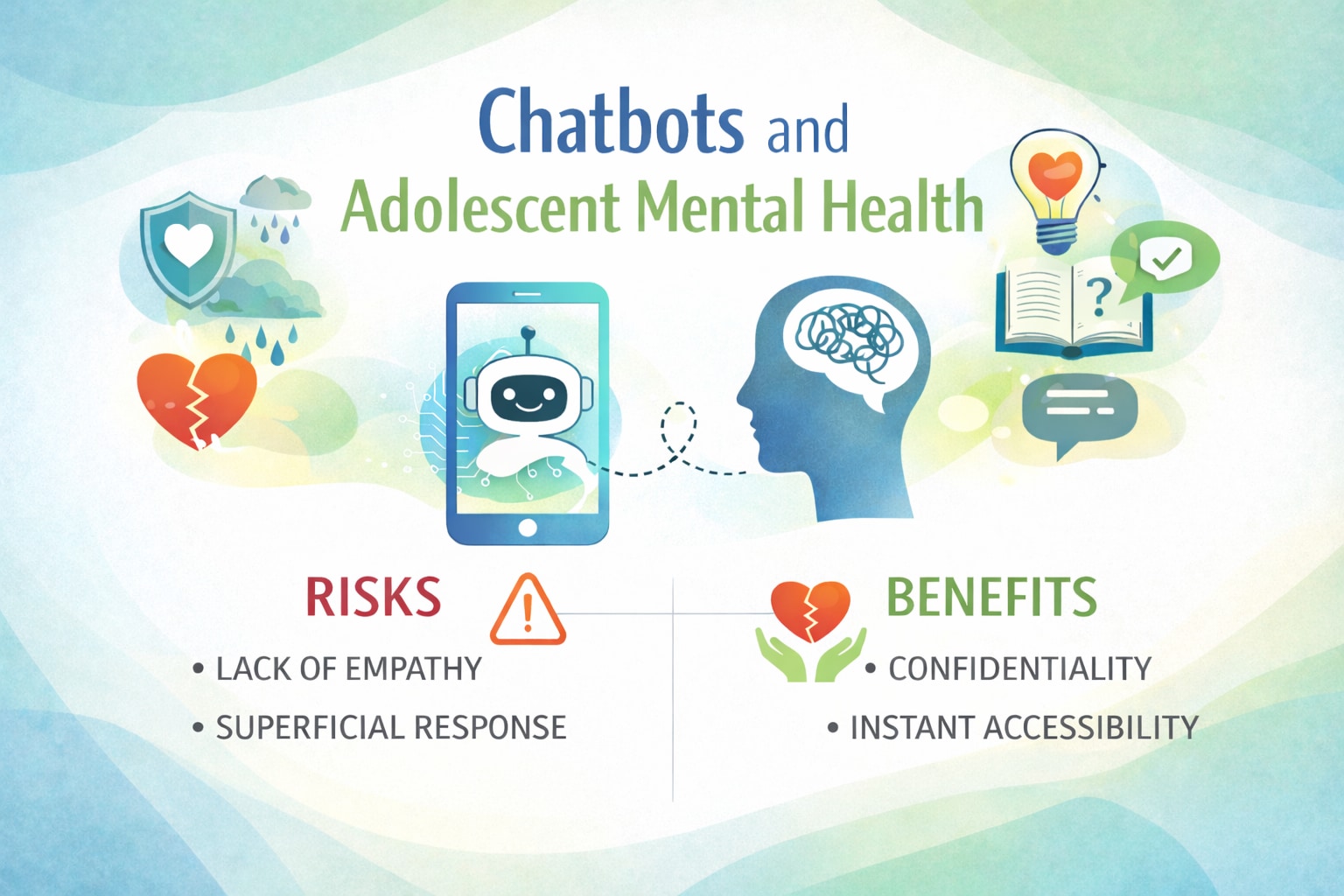

Potential Benefits of AI Chatbots

When used appropriately and with clear boundaries, AI chatbots may offer limited but meaningful support. They can provide psychoeducation about mental health by helping to identify certain mental illnesses and their symptoms and by suggesting general treatment pathways and basic coping strategies.

AI chatbots may also help some young people identify early signs of mental health difficulties and articulate concerns they feel unable to express to a therapist or trusted adult. For some, this may even serve as a first step toward seeking help.

However, as Sophie Klose makes clear, these tools come with important limitations.

Concerns and Risks

- Lack of true empathy: Chatbots can simulate empathy but do not possess emotional attunement, clinical judgment, or professional insight.

- Generic responses: Advice is often broad and insufficiently tailored to complex, individual situations, remaining superficial in its therapeutic capacity.

- False sense of support: Adolescents may feel temporarily “helped” and delay seeking appropriate human or professional care.

- Over-attachment and dependency: Emotional reliance on chatbots can increase isolation and reduce real-world connections. As Sophie Klose notes, this risk is “especially relevant for adolescents who are developmentally more vulnerable to forming intense emotional attachments with relational agents” (p. 108).

- Illusion of intimacy: Emotionally rich language may create the impression of a genuine relationship, a particular risk for developmentally vulnerable adolescents.

The use of AI tools for mental health purposes carries the risk of masking distress rather than addressing it. Chatbots cannot replace the depth, responsiveness, and relational complexity of human support. This brings up a question posed by Sophie Klose:

“Are we fostering healing, or are we conditioning users to seek seamless, affirming responses, potentially weakening their capacity to cope with the natural friction and demands of real-life human relationships?” (p. 111)

The most effective protective factors for young people remain unchanged: trusted relationships, a sense of belonging, attentive adults, and timely support.

As digital technologies continue to evolve, schools must remain thoughtful and grounded. AI may assist, but it cannot replace the presence, empathy, and relational depth that adolescents ultimately need. As educators, we know that what changes young lives is not perfection or constant affirmation, but presence, patience, and relationship. Programs that foster collaboration, creativity, agency, and connection, such as the arts, problem-solving initiatives, and creative learning programs, are powerful supports for mental health and wellbeing.

Sophie Klose’s thesis highlights both the promise and the limits of AI chatbots in adolescent mental health contexts. AI may offer an entry point for young people who are not yet ready to speak to an adult. Yet healing happens when students feel seen, taken seriously, and supported by people who notice and who stay.